When Amazon quietly agreed to buy copper from the first new U.S. mine to come online in more than a decade, the headline read like a niche supply chain story.

Another tech giant hedging risk. Another materials deal buried beneath flashier AI announcements.

But that’s not what this move really signals.

Amazon isn’t buying copper because it suddenly cares about mining. It’s buying copper because the infrastructure required to support artificial intelligence is colliding with physical limits — limits that software, capital and ambition can’t wish away.

Copper sits at the center of that collision. It’s essential to data centers, power distribution, transformers, substations and transmission lines. Every megawatt of new AI capacity brings massive amounts of metal, wiring and coordination with it.

And unlike chips or code, copper doesn’t scale on demand.

The deal itself won’t meaningfully satisfy Amazon’s needs. Even optimistic production estimates from the Arizona mine represent only a fraction of what a single hyperscale data center consumes.

That’s the point.

This isn’t about supply security in isolation, but about what happens when the digital economy starts outrunning the systems that make it possible to build, power and operate it.

Amazon’s copper purchase isn’t a bet on materials as much as it’s an admission that the AI boom is running headlong into the physical world, and that the bottleneck is no longer computing power but execution.

AI’s timing problem

Artificial intelligence moves fast because it can.

New models train in months. New chips deploy in quarters, and cloud capacity expands modularly as demand rises.

The physical systems that support it don’t.

This is the core mismatch shaping the future of AI infrastructure. Technology advances on roughly 18-month cycles. Infrastructure operates on timelines measured in years, often decades.

Transmission lines routinely take six to 10 years to permit and build. In the United States, new mines can take nearly 30 years from discovery to production. Grid interconnection approvals in high-demand regions now stretch well beyond five years.
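To put that mismatch in numbers, the short sketch below counts how many 18-month technology cycles would pass while each of those projects is built. It uses only the durations cited above and assumes, for simplicity, that technology cycles run back to back. The function and constant names are illustrative.

```python
# Back-of-envelope: how many ~18-month technology cycles fit inside an
# infrastructure timeline? The 18-month cycle and the build durations come
# from the article; treating cycles as strictly back to back is a
# simplifying assumption.

TECH_CYCLE_MONTHS = 18


def cycles_elapsed(infra_years: float, cycle_months: int = TECH_CYCLE_MONTHS) -> float:
    """Return how many technology cycles pass while one infrastructure project completes."""
    return infra_years * 12 / cycle_months


for label, years in [
    ("transmission line (low end)", 6),
    ("transmission line (high end)", 10),
    ("new U.S. mine", 30),
]:
    print(f"{label}: ~{cycles_elapsed(years):.1f} technology cycles")
```

Even at the low end, a transmission line spans about four technology cycles; a new U.S. mine spans roughly 20.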

That gap isn’t theoretical. It’s already reshaping where, and whether, projects move forward.

A data center can be designed and built in under two years. The electrical infrastructure required to serve it, however, may arrive long after the facility is ready to switch on.

In some regions, developers are pouring concrete and ordering equipment without knowing when — or if — sufficient power will be available. Capital sits stranded while approvals crawl forward.

This is why Amazon’s copper deal matters: it reflects a growing realization among hyperscalers that infrastructure risk now lives upstream of technology decisions. By the time a power line is approved or a new material source comes online, the AI workload it was meant to support may already be obsolete.

The physical world isn’t built to win that race.

Infrastructure systems were designed for steady, predictable growth — not exponential growth in demand driven by AI. As technological change accelerates, delays that once felt manageable now compound into strategic constraints.

Miss a window, and a project doesn’t just run late. It risks irrelevance.

Copper is the canary

Copper isn’t scarce because demand surprised the market. It’s scarce because the systems that produce it were never built to respond quickly and can’t be retrofitted overnight.

That’s what makes copper such a useful lens for understanding the broader infrastructure challenge facing AI.

It’s non-substitutable at scale and deeply embedded in power and data systems, and it’s required in quantities that only become obvious once projects are underway.

Modern AI data centers are especially copper-intensive. High-density computing power, liquid cooling and redundant power systems all push material needs higher. On average, an AI training data center requires roughly 47 metric tons of copper per megawatt of installed capacity.

Over a facility’s lifecycle, that figure climbs further.

Multiply that across hundreds of megawatts, and the demand curve steepens fast.
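A quick back-of-envelope calculation shows how fast that adds up. The sketch applies the roughly 47 metric tons per megawatt cited above to a few facility sizes. The sizes are hypothetical examples, and the result reflects installed capacity only, not the higher lifecycle figure.

```python
# Rough copper estimate for an AI training data center, using the article's
# figure of ~47 metric tons of copper per MW of installed capacity.
# Facility sizes below are hypothetical; lifecycle needs would run higher.

COPPER_TONNES_PER_MW = 47


def copper_tonnes(capacity_mw: float, tonnes_per_mw: float = COPPER_TONNES_PER_MW) -> float:
    """Estimate copper (metric tons) for a facility of the given installed capacity."""
    return capacity_mw * tonnes_per_mw


for mw in (100, 300, 1000):
    print(f"{mw:>5} MW -> ~{copper_tonnes(mw):,.0f} t of copper")
```

At 1,000 MW, about one gigawatt, the estimate comes to roughly 47,000 metric tons for a single campus.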

The problem is that copper supply doesn’t bend to price signals on useful timelines. New mines take decades to develop. In the U.S., the process can stretch close to 30 years. Globally, declining ore grades and rising technical complexity slow expansion even more.

The result is a structural gap.

Forecasts already point to a multimillion-ton shortfall by 2040, even under optimistic assumptions. That gap shows up in elevated prices, long procurement timelines and strategic behavior like Amazon’s decision to secure supply directly.

Still, copper itself isn’t the real story. It’s the proxy.

Every system AI depends on shares the same traits: heavy upfront investment, long approval timelines, limited substitution options and high coordination complexity.

Power transformers. Switchgear. Transmission corridors. Cooling infrastructure.

When demand spikes, these systems don’t scale. They strain.

Seen through that lens, Amazon’s copper deal isn’t about cornering a market but about buying certainty in a world where physical inputs have become gating factors.

And once materials become gating factors, every inefficiency downstream matters more.

Even when supply exists, projects still stall

Material shortages and permitting delays are easy targets because they sit outside the jobsite. Yet even when approvals are secured and materials are available, projects still lose time — and a surprising amount of it.

The culprit: execution friction.

Across construction, rework accounts for an estimated 9% to 20% of total project costs. Nearly a third of the work performed on active jobsites goes to correcting errors rather than moving the project forward.
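For a sense of scale, the sketch below converts that 9% to 20% range into dollars. The $1 billion budget is a hypothetical round number chosen for illustration, not a figure from any specific project.

```python
# Illustrates what the article's 9%-20% rework range means in dollars.
# The $1 billion project budget is a hypothetical round number.

REWORK_SHARE_LOW = 0.09
REWORK_SHARE_HIGH = 0.20


def rework_cost_range(project_cost: float) -> tuple[float, float]:
    """Return the (low, high) rework cost implied by a 9%-20% share of total cost."""
    return project_cost * REWORK_SHARE_LOW, project_cost * REWORK_SHARE_HIGH


low, high = rework_cost_range(1_000_000_000)
print(f"Rework on a $1B build: ${low / 1e6:,.0f}M to ${high / 1e6:,.0f}M")
```

On that assumed budget, rework would cost somewhere between $90 million and $200 million.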

These aren’t edge cases; they’re systemic.

Data center construction magnifies the problem. Mechanical, electrical and plumbing systems dominate cost and complexity. Tight tolerances leave little margin for error.

A single clash discovered in the field rather than on a drawing can trigger cascading delays. Crews stop. Equipment sits idle. Schedules unravel.

At the root of much of this rework is bad information: outdated drawings, conflicting markups, incomplete submittals and misaligned assumptions.

Individually, these issues seem manageable. Collectively, they drag the entire system down.

In an environment where AI workloads evolve every 18 months, losing weeks or months to coordination failures isn’t just inefficient.

It’s strategic risk.

When timelines slip, projects don’t simply cost more. They miss windows and arrive late to markets that have already moved on.

The uncomfortable truth: the industry doesn’t just lack materials — it leaks time.

And as physical constraints tighten, that leakage becomes harder to absorb.

The grid is already telling us the truth

If there’s any doubt that physical constraints have overtaken digital ambition, the power grid has been making the case.

In the U.S., grid interconnection queues have swollen to nearly 2,600 gigawatts of proposed capacity — more than twice the country’s total installed power plant fleet.

The system isn’t just congested. It’s overwhelmed.

For data center builders, that means years of uncertainty. Projects that are otherwise ready to move forward stall while studies drag on. Grid operators in some regions have paused new connection requests entirely just to process existing backlogs.

Capital is committed. Sites are secured. Construction may begin.

Power, however, remains a question mark.

Europe faces similar constraints, particularly in long-established data center hubs.

In Dublin, for example, Ireland’s grid operator effectively imposed a moratorium on new data center connections due to capacity limits, allowing projects only under strict conditions. Amsterdam has also faced grid congestion that has slowed or paused development, while in Frankfurt, demand for power is already exceeding available grid capacity.

Across the region, grid connection timelines of seven years or more, and in some markets up to 10, are increasingly shaping whether projects move forward at all. That is far longer than the typical construction cycle of a modern data center.

These aren’t future warnings as much as they’re present constraints shaping real investment decisions today.

The grid isn’t signaling what might happen if AI grows unchecked. It’s showing what happens when physical systems are asked to move at digital speed — and can’t.

From paperwork to critical infrastructure

As AI infrastructure pushes against physical limits, one reality becomes harder to ignore: how projects are planned, coordinated and delivered now matters as much as the materials themselves.

For decades, drawings, markups and approvals were treated as administrative artifacts — necessary, but secondary to “real work” in the field.

In a world of compressed timelines and thin margins for error, construction information has now become critical infrastructure.

When teams lack clarity — when they’re working from outdated drawings, conflicting markups or incomplete approvals — friction compounds. Crews hesitate. Work stops and starts. Rework spreads.

What once might have been a minor delay becomes a schedule-breaking problem.

The companies that perform best in this environment won’t be the ones that simply secure more materials or chase faster hardware cycles.

They’ll be the ones that reduce uncertainty.

Fewer handoff errors. Fewer version conflicts. Faster alignment between design intent and field execution.

This isn’t about adopting new tools for their own sake, but about recognizing that coordination failures now carry outsized consequences.

When copper is scarce, power is constrained and approvals take years, there’s far less room to absorb mistakes.

As the physical economy becomes the limiting factor for digital growth, execution discipline becomes a competitive advantage.

The real risk to AI isn’t innovation … it’s friction

Amazon’s copper deal isn’t an outlier.

It’s an early signal.

As AI infrastructure expands, more companies will move upstream — securing materials, power and capacity not because they want to, but because uncertainty has become too costly to ignore.

This is what happens when digital growth collides with physical systems that can’t move fast enough.

The danger isn’t that AI development slows but that it becomes uneven. Large players with the capital to absorb delays or pre-buy supply will keep moving. Others will wait in interconnection queues, navigate multi-year approvals and watch windows close.

The gap won’t be technological. It will be infrastructural.

The next phase of the AI economy won’t be defined solely by faster models or more powerful chips, but by how quickly the physical world can respond — and how much waste we’re willing to tolerate along the way.

In that reality, the teams that succeed won’t just build more.

They’ll build with clarity, coordination and discipline, treating execution not as an afterthought, but as the infrastructure that makes everything else possible.

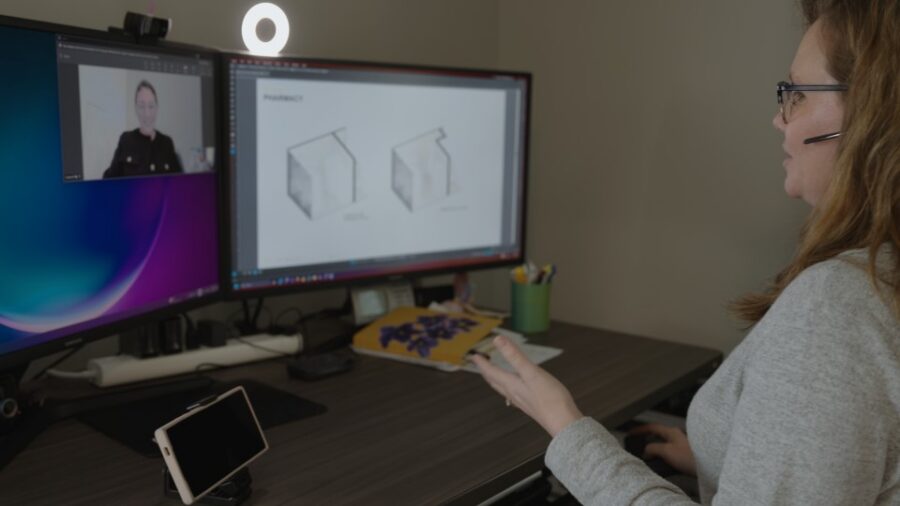

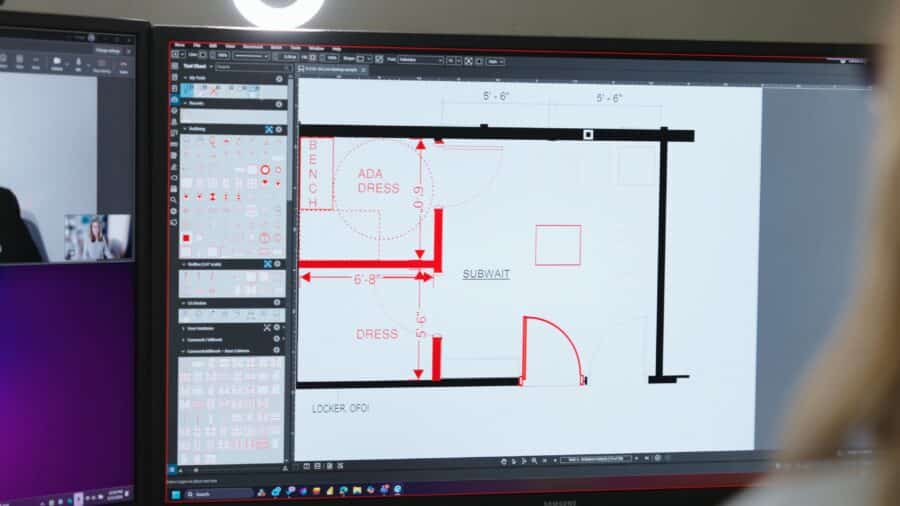

How does Bluebeam help reduce execution friction on complex infrastructure projects?

Bluebeam helps teams align around a single, trusted set of drawings and documents. By centralizing markups, measurements and revisions in real time, it reduces the version conflicts and information gaps that drive rework, delays and downstream coordination failures on high-stakes projects.

Why does construction information matter more as AI infrastructure timelines compress?

When material supply, power access and approvals are already constrained, there’s little tolerance for mistakes. Bluebeam treats drawings and approvals as operational infrastructure, helping teams surface issues earlier, coordinate faster and keep execution aligned with design intent as schedules tighten.

How does Bluebeam support data center and power-intensive builds?

Data centers concentrate complexity in electrical, mechanical and coordination-heavy scopes. Bluebeam enables detailed reviews, clash identification and field-to-office communication across those systems, helping teams catch problems digitally before they stall work in the field or strand capital.

Does Bluebeam replace other construction or project management platforms?

No. Bluebeam complements project management, BIM and ERP systems by strengthening the layer where most execution friction lives: drawings, documents and collaboration. It integrates into existing workflows, improving clarity and coordination without forcing teams to rebuild their tech stack.

What makes Bluebeam relevant as physical constraints become the bottleneck?

As materials, power and permitting become gating factors, the competitive edge shifts to execution discipline. Bluebeam helps teams waste less time, absorb less rework and move with greater certainty — turning coordination from an afterthought into an advantage.

Image created using generative AI.

Build faster when every drawing and decision actually lines up.