Most quantity takeoffs don’t fail during the first measurement pass. They fail later when the drawings change.

At bid time, everything looks solid. Quantities check out. Pricing feels competitive. The estimate goes out the door. Then an addendum drops. A slab thickens. A wall type shifts. A scope clarification lands late Friday afternoon. Suddenly, what looked airtight starts to leak.

That’s the real stress test of a takeoff — not how fast it was produced, but how well it handles change.

Revisions are unavoidable. Treating them like edge cases is one of the most common — and expensive — mistakes in estimating. The difference between takeoffs that hold up and those that unravel rarely comes down to effort or experience.

It comes down to structure.

A quantity takeoff is the process of measuring and listing material quantities directly from construction drawings. It is the foundation every estimate is built on. When those drawings change, the takeoff must change with them. If it can’t update quickly and accurately, the estimate drifts, teams end up guessing, and guessing creates risk and lost money. The question isn’t whether drawings will be revised. They always are. The question is whether your workflow was built to absorb it.

Why do drawing changes break so many takeoffs?

Revisions rarely introduce new complexity; they instead expose weaknesses already embedded in how quantities were captured, organized and traced. When takeoffs are built as if drawings are final, even minor changes force disproportionate rework. Failure isn’t about change itself, but about how prepared the workflow is to absorb it.

Addenda don’t create chaos. They reveal it.

Too many takeoffs are built as if the drawings are final, even when everyone knows they aren’t. Quantities get measured fast. Assumptions sneak in early. Data moves downstream before it’s stable. When revisions arrive, teams aren’t adjusting; they’re rebuilding.

That’s when accuracy slips. That’s when confidence erodes. And that’s when estimating turns reactive instead of controlled.

A takeoff that can’t be revised cleanly wasn’t finished. It was fragile.

Why do drawing changes break so many takeoffs in practice?

Most revision failures follow predictable structural patterns: unclear quantity definitions, weak organization and lost traceability between drawings and numbers. These issues stay hidden during initial takeoff but surface immediately when scope shifts. The more assumptions embedded early, the harder it becomes to isolate what truly changed.

Most revision failures follow the same patterns. They stay hidden until the drawings move.

The most common takeoff breakdown triggers include:

- Time pressure and last-minute addenda that force rushed, manual updates — where errors sneak in fast and get caught late.

- Working from outdated drawing sets when version checks are skipped — the single most avoidable source of rework.

- Miscalibrated digital scales — a single wrong calibration can introduce roughly 10% quantity error across an entire sheet.

- Decentralized files — takeoffs saved on personal drives with no audit trail mean teams repeat the same mistakes project after project and have no way to prove what changed or when.
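
To see how a small calibration slip can reach the roughly 10% range mentioned above, here is a rough arithmetic sketch. The 5% linear scale error and the 12,000 sf slab are assumptions chosen for illustration, not figures from any tool or project. Because area scales with the square of length, a scale that is 5% off inflates every measured area by about 10%.

```python
# Illustrative only: how a linear scale error propagates to measured quantities.
# The 5% error and the slab area are hypothetical values for this example.

def length_error(scale_error: float) -> float:
    """Relative error in a measured length for a given relative scale error."""
    return scale_error


def area_error(scale_error: float) -> float:
    """Relative error in a measured area: (1 + e)^2 - 1."""
    return (1 + scale_error) ** 2 - 1


scale_error = 0.05          # scale calibrated 5% too large (assumed)
true_slab_area_sf = 12_000  # hypothetical slab area in square feet

measured_area_sf = true_slab_area_sf * (1 + area_error(scale_error))

print(f"Length error:  {length_error(scale_error):.1%}")
print(f"Area error:    {area_error(scale_error):.2%}")
print(f"Measured area: {measured_area_sf:,.0f} sf vs. true {true_slab_area_sf:,} sf")
```

Linear items such as wall runs pick up the scale error once; area items pick it up twice, which is why one bad calibration can distort a whole sheet.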

Quantities aren’t clearly defined: In many workflows, quantity takeoffs get contaminated early. Waste factors, allowances and procurement logic are baked into measurements before pricing even starts. When a revision hits, it’s no longer clear what was measured and what was assumed.

If slab thickness changes, which number needs to move? The geometric quantity? The waste-adjusted total? The priced value buried three steps downstream? Without clean separation, every revision turns into a guessing game.

Organization comes too late — or not at all: Inconsistent naming, mixed layers and improvised groupings make initial takeoffs harder to review and revisions harder to isolate. Instead of updating a specific system or floor, estimators end up combing through entire datasets trying to figure out what changed.

When structure is missing, small revisions snowball into major rework.

Quantities lose their visual tie to the drawings: Once numbers move into spreadsheets or estimating systems, their connection to the drawing often weakens. Markups stop reflecting current scope. Reviews shift from visual verification to trust-based reconciliation.

At that point, no one is fully confident which quantity is right, and proving it burns time teams don’t have.

Data gets copied too early: Manual exports and copy-and-paste workflows introduce version drift almost immediately. When quantities change at the source but not everywhere else, teams spend more time reconciling numbers than evaluating impact.

Revisions should trigger adjustments. Too often, they trigger audits.

What do revision-resilient takeoffs do differently?

Teams that handle revisions well don’t rely on speed or heroics. They design takeoffs to expect change — by keeping quantities clean, visible and layered. Structure limits how far a revision can ripple, turning what could be a rebuild into a controlled update.

Teams that handle revisions without panic don’t have better luck. They have better structure. They design takeoffs for change, not just speed.

They keep the quantity takeoff clean: Revision-resilient workflows treat the takeoff as a stable foundation. Net quantities only. No waste. No pricing logic. No procurement assumptions.

That separation matters. When drawings change, estimators update what the drawings show — nothing more. Downstream logic adjusts without contaminating the base data.

When quantity, material strategy and pricing stay layered, changes stay contained.
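
One way to picture that layering is a minimal sketch in which the takeoff stores only the net quantity and everything else is derived from it. The class and function names, the 5% waste factor, the unit cost and the slab dimensions are all hypothetical; this is not how any particular estimating product models its data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NetQuantity:
    """What the drawing shows: geometry only, no waste or pricing."""
    item: str
    unit: str
    amount: float


def order_quantity(net: NetQuantity, waste_factor: float) -> float:
    """Material strategy layer: waste is applied on top of the net quantity."""
    return net.amount * (1 + waste_factor)


def priced_total(net: NetQuantity, waste_factor: float, unit_cost: float) -> float:
    """Pricing layer: cost is derived on demand, never stored in the takeoff."""
    return order_quantity(net, waste_factor) * unit_cost


AREA_SF = 10_000   # hypothetical slab area
WASTE = 0.05       # assumed waste factor
UNIT_COST = 180.0  # assumed cost per cubic yard


def slab_concrete_cy(thickness_in: float) -> NetQuantity:
    """Net concrete volume for the slab at a given thickness, in cubic yards."""
    volume_cf = AREA_SF * thickness_in / 12
    return NetQuantity("Slab on grade concrete", "cy", volume_cf / 27)


before = slab_concrete_cy(6)  # as bid
after = slab_concrete_cy(8)   # after a hypothetical addendum thickens the slab

for label, net in (("Rev 0", before), ("Rev 1", after)):
    order = order_quantity(net, WASTE)
    cost = priced_total(net, WASTE, UNIT_COST)
    print(f"{label}: net {net.amount:.1f} cy, order {order:.1f} cy, cost ${cost:,.0f}")
```

When the slab thickens, only the net quantity is edited. The order quantity and cost follow from it, so there is no question about which number needs to move.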

They keep quantities visible: Every measurement stays visible on the drawing. Layers are used deliberately to isolate scope by trade, system or phase. Color makes coverage obvious.

Visual verification becomes the fastest revision check. If an area isn’t marked, it likely wasn’t measured — or updated.

This is where digital workflows outperform spreadsheets. Review doesn’t depend on trusting totals. It happens directly on the drawings.

They use overlay and comparison tools to isolate deltas: Overlay tools superimpose a new drawing over the prior version so differences jump out visually — without combing through every sheet manually. Instead of re-measuring the entire plan, estimators can isolate only the areas that changed, which can reduce hours of revision work to minutes.

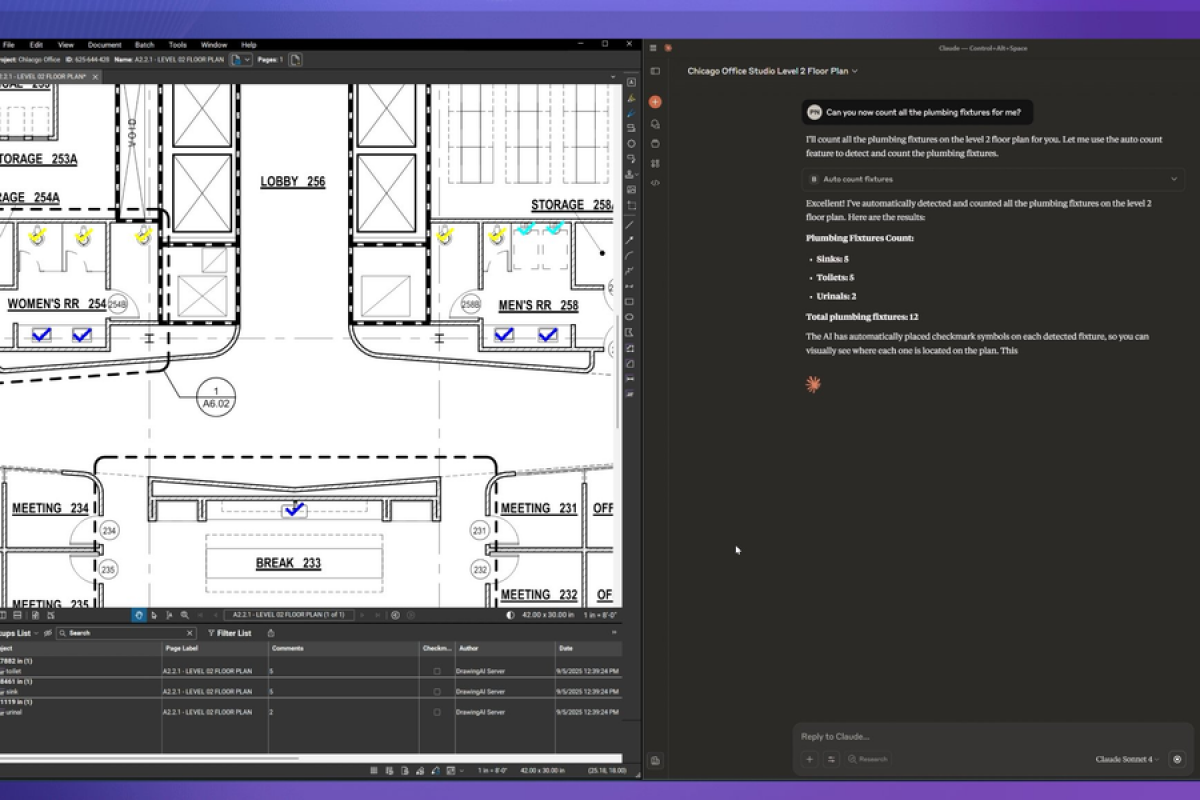

Tools like Bluebeam include drawing comparison features built specifically for this workflow, letting teams generate side-by-side views of old vs. new sheets and flag only the affected quantities. It’s the difference between a surgical update and a full rebuild.

They organize for change, not just cleanliness: Revision-resilient takeoffs aren’t just tidy; they are structured to limit blast radius.

Quantities are grouped so changes affect specific slices of scope, not the entire estimate. A revision to one system doesn’t force a rebuild of everything else.

That upfront discipline can feel slower. It pays off every time drawings shift.

They update quantities at the source: When revisions arrive, disciplined teams update measurements where they live — on the drawing. Downstream systems follow the updated data instead of chasing it across disconnected files.

This “update once, let everything else follow” approach prevents version drift and keeps the takeoff, estimate and budget aligned.

On BIM-enabled projects, that logic goes further: A live BIM link creates a direct connection between the 3D model and the takeoff data, so quantities adjust automatically when the model changes. Drawings and estimates update together rather than requiring manual reconciliation after every design iteration. For teams working on complex or fast-moving projects, BIM integration for takeoffs isn’t a luxury; it’s the only way to keep pace with design changes without burning estimator hours on cleanup.

Why are full takeoff rebuilds a warning sign?

Rebuilding an entire takeoff after an addendum usually signals fragile structure, not unavoidable complexity. When quantities, organization or traceability fail early, revisions feel catastrophic. In resilient workflows, change prompts targeted updates, not a reset.

Rebuilding an entire takeoff after an addendum isn’t normal. It’s a signal.

It usually means quantities weren’t clearly defined, structure was inconsistent or traceability was lost early. Time pressure makes rebuilds feel inevitable, but they’re often symptoms of fragile workflows, not unavoidable complexity.

In resilient workflows, revisions don’t trigger panic. They trigger a process: isolate the change, update the affected quantities, review the impact and move forward.
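
The "isolate the change" step can be sketched as a comparison of two takeoff revisions keyed by scope group and item. The group names, items and quantities below are made-up sample data, and the `deltas` helper is a hypothetical illustration rather than a feature of any specific tool.

```python
# Each takeoff revision maps (scope group, item) -> net quantity.
# All keys and values are hypothetical sample data.
Takeoff = dict[tuple[str, str], float]

rev0: Takeoff = {
    ("Level 1 / Concrete", "Slab on grade (cy)"): 185.2,
    ("Level 1 / Partitions", "Wall type A (lf)"): 420.0,
    ("Level 2 / Partitions", "Wall type A (lf)"): 390.0,
}
rev1: Takeoff = {
    ("Level 1 / Concrete", "Slab on grade (cy)"): 246.9,
    ("Level 1 / Partitions", "Wall type A (lf)"): 420.0,
    ("Level 2 / Partitions", "Wall type A (lf)"): 310.0,
    ("Level 2 / Partitions", "Wall type B (lf)"): 80.0,
}


def deltas(old: Takeoff, new: Takeoff, tolerance: float = 1e-9) -> Takeoff:
    """Return only the lines whose quantity changed, was added or was removed."""
    changed: Takeoff = {}
    for key in old.keys() | new.keys():
        diff = new.get(key, 0.0) - old.get(key, 0.0)
        if abs(diff) > tolerance:
            changed[key] = diff
    return changed


# Print the affected lines only; unchanged scope never appears in the review.
for (group, item), diff in sorted(deltas(rev0, rev1).items()):
    print(f"{group:22} {item:22} {diff:+.1f}")
```

Because quantities are grouped by floor and system, the review list contains only the three affected lines, and the unchanged Level 1 partitions never enter the conversation.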

Adjustments beat rebuilds. Every time.

How do structured takeoffs change the estimator’s role?

When takeoffs are structured for revision, estimators spend less time re-measuring and more time evaluating impact. Judgment replaces firefighting. Experience shows up in understanding scope risk, cost implications and downstream effects — not in scrambling to reconcile numbers.

When takeoffs are structured, revisions shift where estimators spend their time.

Instead of re-measuring everything, estimators focus on validating scope, assessing impact and applying judgment where it matters. Experience shows up not in clicking faster, but in understanding how changes affect cost, schedule and risk.

Modern tools can surface changes quickly. They don’t replace accountability. Estimators still decide what counts, what doesn’t and what needs clarification.

Structure creates room for judgment. Without it, even experienced teams end up firefighting.

What does this mean for teams under constant bid pressure?

Revision-resilient takeoffs change how teams respond under pressure. Faster responses come from clarity, not haste. When quantities are traceable and visible, scope discussions sharpen, pricing adjustments speed up and confidence carries through award and handoff.

Revision-resilient takeoffs change how teams operate when the pressure is on.

They respond to addenda faster — not because they rush, but because they aren’t untangling their own work. Pricing adjustments are clearer. Scope conversations are sharper. Handoffs to project teams carry fewer question marks.

Confidence improves, too. When quantities are visible, traceable and cleanly separated, teams don’t second-guess themselves after award. They know where the numbers came from and how they changed.

Why should takeoffs be built for change, not ideal drawings?

Drawings will change. Scope will shift. Clarifications will arrive late.

The only real question is whether your takeoff workflow amplifies disruption or absorbs it.

Takeoffs built for speed alone crack under pressure. Takeoffs built with structure, visibility and discipline hold up — and make estimating less reactive, not more.

This isn’t about features. It’s about designing workflows that treat change as expected, not exceptional.

Because in estimating, the work that lasts isn’t the fastest. It’s the work that still makes sense when everything else moves.

Here’s a practical audit to run on your current workflow:

- Are your quantities cleanly separated from waste factors and pricing logic?

- Do your layers isolate scope by trade, system or phase?

- When a revision arrives, can you identify the affected area on the drawing without combing through the whole estimate?

- Is there a version-controlled document library with a complete revision history, or are takeoffs living on personal drives?

If any answer is no, the next addendum will cost more than it should.

Bluebeam is built for exactly this kind of structured, revision-ready workflow — with purpose-built digital takeoff tools, overlay and comparison features, customizable layers and cloud-based collaboration that keeps quantities and drawings in sync.

Bluebeam Takeoff & Revision FAQ

How does Bluebeam help teams manage takeoff revisions?

Bluebeam keeps quantities tied directly to the drawing through visible markups and structured layers, making it easier to isolate changes and update measurements at the source rather than rebuilding downstream data.

Why is visual traceability important during revisions?

Visual traceability allows estimators to verify scope changes directly on the drawing instead of relying on abstract totals. This reduces reconciliation time and increases confidence when quantities shift.

Can Bluebeam separate base quantities from pricing assumptions?

Yes. Bluebeam supports clean quantity takeoffs that remain independent from waste factors, pricing logic or procurement strategy, allowing downstream estimating tools to adjust without corrupting the source data.

How do layers improve revision control in takeoffs?

Layers let teams organize quantities by system, trade, phase or scope segment, limiting how far a revision can ripple and making updates faster and more targeted.

Is Bluebeam suitable for high-volume addenda environments?

Bluebeam is designed for iterative review and revision workflows, helping teams manage frequent drawing updates without losing alignment between quantities, markups and estimates.

Why do small takeoff errors cause major project problems?

Small mistakes in takeoff calculations — misread scales, duplicated items, missed specification changes — compound across project phases. A quantity that’s off by 10% at bid can mean material shortages in the field, on-site adjustments that delay the schedule, and cost overruns that erode the margin a team worked hard to protect. The earlier the error enters the workflow, the further it travels before anyone catches it.

What manual pitfalls most often break takeoff reliability during revisions?

The most consistent offenders are working from outdated plan sets when version checks are skipped, miscalibrating digital scales (one wrong calibration can introduce roughly 10% quantity error across a sheet), and saving takeoffs on personal drives rather than a centralized system. Without shared, versioned storage, there’s no audit trail — which means teams repeat the same estimating mistakes from project to project with no way to learn from them.

How much time and cost do manual revisions typically add?

On mid-sized projects, manual revision workflows can double or triple the time required to update a takeoff compared to structured digital processes. Beyond the direct labor cost, manual updates delay bid responses, increase the risk of pricing errors carrying through to award, and create the kind of version drift that requires reconciliation sessions no one has time for. Construction firms that embrace structured digital workflows — with proper revision controls, centralized documentation and live comparison tools — build in the predictability needed to protect margins and maintain cash-flow clarity, especially as labor shortages and budget pressures intensify across the industry.
