In April 2021, a framing lumber package that cost $35,000 doubled to $71,000 — for the same house, same neighborhood, same floor plan. Builders with no price escalation clauses, no supplier guarantees, no hedge got crushed. Lumber had risen more than 300% from pre-pandemic levels. The industry adapted because the signal was legible. Painful, but legible.

Now there’s a different kind of supply problem. And this one doesn’t show up on a commodity index.

Since late March 2026, Anthropic — maker of Claude, one of the most widely used AI platforms in professional workflows — has been actively rationing the resource its tools run on during peak hours: 5 to 11 a.m. Pacific Time on weekdays. Which is, for the record, exactly when most project teams are starting their day.

The resource being rationed isn’t copper. It isn’t lumber.

It’s tokens — and if your firm is using AI to review specs, process RFIs or analyze change orders, you’re consuming them whether you know it or not. Most construction firms have no idea what that means. That’s the problem.

Even for firms that believe in the promise of AI in our industry, as we do, it’s important to spread awareness of potential problems before it’s too late.

What a Token Is

A token is a chunk of text — roughly 75 words per 100 tokens. When you send something to an AI tool, it doesn’t just process your question. It reads your question plus any attached documents plus whatever instructions are baked into the software, then generates a response. Every piece of that burns tokens.

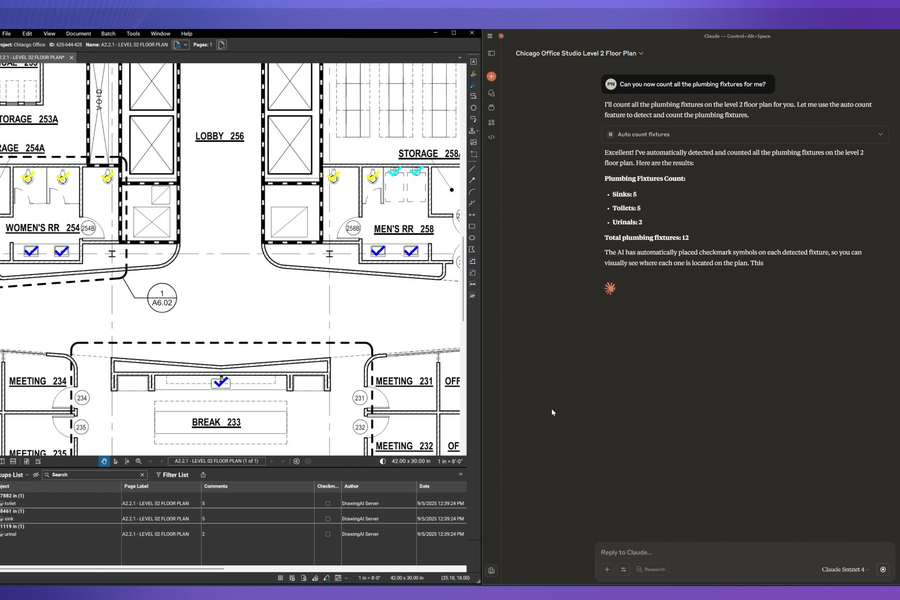

A simple chatbot query runs 500 to 2,000 tokens. Fine. But think about what construction firms are actually doing with AI: reviewing a 50-page spec, cross-referencing drawings, drafting an RFI response. That’s an agentic task: multi-step, document-heavy, iterative. Agentic AI tasks burn 5 to 30 times more tokens than simple chat interactions. A complex document review can run 50,000 to 200,000 tokens.
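For a rough feel for these numbers, the rule of thumb and the agentic multiplier can be turned into a back-of-envelope estimate. This is a minimal sketch, not a billing calculator: the function names are illustrative, and the 500-words-per-page figure is our assumption, not a number from any vendor. Real token counts depend on the model's tokenizer.

```python
# Rule of thumb from the text: about 75 words per 100 tokens.
WORDS_PER_TOKEN = 0.75

# Assumption (not from any vendor): a dense spec page holds about 500 words.
ASSUMED_WORDS_PER_PAGE = 500


def estimate_tokens(word_count):
    """Approximate token count for a given number of words."""
    return round(word_count / WORDS_PER_TOKEN)


def agentic_range(chat_tokens, low=5, high=30):
    """Scale a simple chat cost by the 5x to 30x agentic multiplier."""
    return chat_tokens * low, chat_tokens * high


# Tokens needed just to read a 50-page spec once, before any back-and-forth.
spec_tokens = estimate_tokens(50 * ASSUMED_WORDS_PER_PAGE)
print(spec_tokens)

# Agentic cost range for what would have been a 2,000-token chat query.
print(agentic_range(2_000))
```

Under these assumptions, a single read of the spec already costs tens of thousands of tokens, and an iterative review re-reads parts of it on every step.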

The analogy that works: kilowatt-hours. You don’t see them, don’t think about them — until the grid gets stressed and the utility calls it a brownout. That’s what’s happening right now, except it’s not the power grid. It’s the AI infrastructure your workflows are running on.

The Rationing Is Real, and It’s Already Happening

The Wall Street Journal reported on April 12 that the AI gold rush is rapidly drying up the supply of computing power. The data is specific.

The uptime problem. As of April 8, Anthropic’s Claude API had a 98.95% uptime rate over 90 days. Consumer Claude.ai: 98.68%. The enterprise standard is 99.99%. Annualized, that gap means roughly 91 extra hours of potential downtime per year for the API versus what production software is supposed to deliver.
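The uptime percentages are easier to weigh once converted to hours. The sketch below annualizes the figures quoted above. Extrapolating a 90-day measurement to a full year is an assumption, and `downtime_hours` is an illustrative helper name.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours


def downtime_hours(uptime_pct):
    """Hours of downtime per year implied by an uptime percentage."""
    return (100 - uptime_pct) / 100 * HOURS_PER_YEAR


api = downtime_hours(98.95)       # Claude API, 90-day figure
consumer = downtime_hours(98.68)  # Claude.ai, 90-day figure
target = downtime_hours(99.99)    # enterprise standard

print(f"API:      {api:.1f} hours/year")       # about 92 hours
print(f"Consumer: {consumer:.1f} hours/year")  # about 116 hours
print(f"Target:   {target:.1f} hours/year")    # under 1 hour
print(f"API gap:  {api - target:.1f} hours/year")
```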

The throttling. When Anthropic announced peak-hour rationing, a company staffer acknowledged roughly 7% of users would hit limits they’d never hit before. One developer burned through his Claude Code limit in 45 minutes, after previously going weeks without hitting it. A Claude Max subscriber at $200/month hit quota exhaustion in 19 minutes.

The enterprise response. Retool’s CEO said he considers Anthropic’s Opus model the best available for enterprise, and switched to OpenAI anyway. “Anthropic has just been going down all the time,” he told the Journal. Since mid-February, enterprise clients have been quietly migrating.

Imagine your concrete supplier announcing deliveries might not happen between 7 and 10 a.m. weekdays. And sometimes the trucks don’t show. You’d have a backup supplier by Thursday. Does your firm have a backup AI?

You Know What Lumber Costs. You Have No Idea What Tokens Cost.

When lumber spiked in 2021, construction firms had a problem they could see and measure. NAHB surveyed its members on how they responded. Forty-seven percent added price escalation clauses to contracts. Twenty-nine percent pre-ordered to lock in prices. The industry adapted because the signal was legible.

Token scarcity doesn’t work like that. There’s no futures market. No procurement manager whose job is to source compute. No weekly spot price index. When the AI gets throttled at 9 a.m. on a submittal deadline, it doesn’t show up as a line item — it shows up as a project manager staring at a spinning wheel, burning crew time trying to figure out if it’s her internet connection or a capacity decision made 2,500 miles away.

No major construction AI platform publishes an AI-specific uptime SLA or token consumption guarantee. Procore’s platform SLA commits to 99.9% uptime but explicitly excludes third-party dependencies — which is what its Copilot AI runs on. Autodesk’s uptime target is a goal, not a guarantee. And Microsoft’s Copilot terms describe the product as “for entertainment purposes only.” That’s in the terms of service. For a tool firms are weaving into bid workflows.

You know what a concrete pour costs per hour when a crew is standing around. You have zero visibility into what it costs when your AI goes down on a submittal deadline. That gap — between operational dependency and operational awareness — is where the next wave of construction risk is quietly building.

The Early Adopter Trap

The firms most exposed to the token crunch aren’t the laggards. They’re the early adopters — the ones who listened, who did the organizational work to integrate AI into document review, RFI processing, change order analysis. The ones who built real workflows on top of these tools.

They’re also the ones who now have operational dependencies on a supply chain they don’t control, can’t see and have no contingency plan for.

A 2025 Infosys survey of 1,502 executives found 95% had experienced at least one problematic AI incident in the prior two years. Seventy-seven percent of the time, the damage showed up as direct financial loss. Construction has barely started feeling it — because most firms haven’t yet built the deep workflow dependencies that create real exposure. They will. And the firms that built first will feel it first.

Three Things to Do Before the Next Throttled Tuesday

- Know what you’re running on. Ask your AI vendors — in writing — whether their SLA commitments cover AI features specifically. The answer, or the absence of one, tells you something important about your risk exposure.

- Build redundancy like it’s infrastructure. An emerging class of AI gateway and routing tools (Portkey and Not Diamond, to name two) now provides multi-provider failover for enterprises. Two independent AI providers at 99.3% uptime each, operating as failover, drop the probability of a simultaneous outage to about 0.005%. Same logic as backup generators. The tools exist.

- Watch the token economy. Portkey’s production data shows average token consumption per request has quadrupled in a single year. As agentic AI deepens into construction workflows, the rationing pressure deepens with it. Venture investor Tom Tunguz put it plainly: “The age of abundant AI is over, and it will remain so for years.”
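The redundancy math in the second item, and the failover pattern behind it, can be sketched in a few lines. This is an illustration under stated assumptions: outages are treated as fully independent, and `primary` and `backup` are hypothetical stand-ins, not the API of Portkey, Not Diamond, or any real provider.

```python
def simultaneous_outage_pct(uptime_a_pct, uptime_b_pct):
    """Chance (in percent) that two independent providers are down at once."""
    down_a = 1 - uptime_a_pct / 100
    down_b = 1 - uptime_b_pct / 100
    return down_a * down_b * 100


def call_with_failover(prompt, providers):
    """Try each (name, callable) in order; return the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch specific errors
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical provider clients, for illustration only.
def primary(prompt):
    raise TimeoutError("rate limited during peak hours")


def backup(prompt):
    return f"draft response to: {prompt}"


print(f"{simultaneous_outage_pct(99.3, 99.3):.4f}%")  # 0.0049%
print(call_with_failover("RFI 12", [("primary", primary), ("backup", backup)]))
```

The independence assumption matters. Two vendors that share the same cloud region or the same upstream model fail together more often than this math suggests.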

The lumber spike of 2021 added $35,872 to the cost of an average new single-family home. It hurt. But it was visible. You could put a number on it, add a clause, find a hedge.

Token scarcity is the same structural problem — scarce resource, surging demand, supply chain that can’t respond fast enough — dressed in invisible clothes. You can’t see it until the spinning wheel shows up on a deadline morning. By then the cost is already being absorbed somewhere: crew time, project delay, a project manager doing manually what the AI was supposed to do.

We wrote earlier this year about why the AI boom keeps hitting a physical wall — copper, power grids, permitting timelines. That piece was about why we can’t build enough data centers fast enough. This one is about what happens when the data centers that exist still can’t keep up.

Tokens aren’t lumber. But right now, they’re behaving a lot like lumber did in May 2021. The firms that figure that out before it costs them are the ones that will be glad they read this on a Tuesday morning, when the AI was still working.

The firms that thrive through supply chain disruptions are the ones that plan ahead. The same applies to AI. Bluebeam Max gives your team AI-powered tools built for construction — with multi-model flexibility designed to keep work moving, no matter what’s happening upstream.